Clustering for Pseudo-Labels: Grouping Data Before Manual Annotation

Clustering and pseudo-labeling streamline data annotation by assigning provisional labels to unlabeled data before it is used to train AI models.

Unsupervised methods generate pseudo-labels to train AI models on unannotated data. This approach is used in semi-supervised learning, where pseudo-labels improve the performance of AI models. However, generating noiseless labels remains a challenge that requires robust clustering methods.

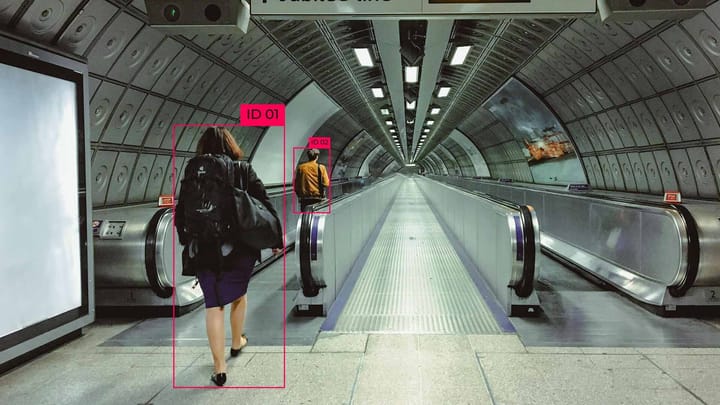

Methods such as dynamic hierarchical clustering show how refining clusters during training can improve accuracy in person identification tasks.

Key Takeaways

- Clustering and pseudo-labeling reduce annotation time.

- Unsupervised methods allow for the use of unlabeled data.

- Semi-supervised learning performs better with high-quality pseudo-labels.

- Clustering methods improve label denoising.

Understanding Semi-Supervised Learning

Semi-supervised learning (SSL) is a machine learning technique that trains an AI model using small amounts of labeled and large amounts of unlabeled data. The SSL algorithm uses labeled data for initial training and then unlabeled data to improve the model's generalization. It is used in speech recognition, computer vision, and biomedical research.
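The two-phase idea described above (start from labeled data, then draw on unlabeled data) can be sketched as a simple self-training loop. This is a minimal, dependency-free illustration and not a specific published algorithm: it assumes 2-D feature vectors, a nearest-centroid classifier, and a hypothetical `margin` rule for deciding which predictions are trustworthy. All function names are invented for this example.

```python
import math


def class_centroids(samples):
    """samples: list of (point, label). Returns {label: mean point}."""
    grouped = {}
    for point, label in samples:
        grouped.setdefault(label, []).append(point)
    return {
        label: tuple(sum(axis) / len(pts) for axis in zip(*pts))
        for label, pts in grouped.items()
    }


def self_train(labeled, unlabeled, margin=1.0, rounds=3):
    """Grow the training set with pseudo-labels the classifier is sure about."""
    train = list(labeled)
    pool = list(unlabeled)
    for _ in range(rounds):
        # Phase 1: fit the (very simple) model on everything labeled so far.
        centroids = class_centroids(train)
        remaining = []
        for point in pool:
            # Phase 2: predict on unlabeled data, nearest centroid first.
            ranked = sorted(centroids, key=lambda lab: math.dist(point, centroids[lab]))
            best, runner_up = ranked[0], ranked[1]
            gap = math.dist(point, centroids[runner_up]) - math.dist(point, centroids[best])
            if gap >= margin:
                train.append((point, best))  # accept as a pseudo-label
            else:
                remaining.append(point)  # too ambiguous, retry next round
        pool = remaining
    return train, pool


labeled = [((0.0, 0.0), "a"), ((4.0, 4.0), "b")]
unlabeled = [(0.5, 0.2), (3.6, 4.1), (1.0, 0.8), (2.0, 2.1)]
train, pool = self_train(labeled, unlabeled)
```

With this toy data, three of the four unlabeled points are absorbed into the training set, while the point that sits between the two classes stays in `pool` and would be a natural candidate for manual annotation.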

Benefits of Unlabeled Data in Modern Models

Unlabeled data is easy to collect in large amounts, making it possible to train more extensive and complex AI models. This training method is advantageous in cases where labeling the data is an expensive and time-consuming process.

Using unlabeled data helps AI models understand the structure and features of the data. Semi-supervised learning (SSL) algorithms allow AI models to learn with minimal labeled examples.

Manually labeling data is time-consuming and resource-intensive. With unlabeled data, AI models can find patterns without human intervention.

The Role of Unlabeled Data in Improving Model Performance

Deep learning models recognize important features from unlabeled data using contrastive learning and prototype-based methods. These approaches allow AI models to distinguish high-level features like textures and shapes in images without labels.

Studies have shown that AI models trained with additional unlabeled data can achieve better accuracy than models trained on the labeled data alone. This suggests that unlabeled data can reduce reliance on costly manual annotation processes.

Creating quality pseudo-labels

The quality of pseudo-labels determines the performance of an AI model and the quality of its training. High-quality pseudo-labels reduce label noise and improve the accuracy of AI models without additional human intervention.

Let's consider techniques for ensuring quality in pseudo-labels:

Recent studies have demonstrated that these methods improved performance on classification tasks.
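One common way to keep pseudo-labels clean is confidence thresholding: a prediction becomes a pseudo-label only if the model's top class probability is high enough. The article does not spell this technique out here, so treat the sketch below as an assumed example. The function name `select_confident`, the threshold of 0.9, and the probability values are all hypothetical.

```python
def select_confident(probabilities, threshold=0.9):
    """Return (sample index, predicted class) for predictions at or above the threshold."""
    selected = []
    for i, probs in enumerate(probabilities):
        best = max(range(len(probs)), key=probs.__getitem__)
        if probs[best] >= threshold:
            selected.append((i, best))
    return selected


# Hypothetical softmax outputs for four unlabeled samples and three classes.
predicted = [
    [0.97, 0.02, 0.01],
    [0.40, 0.35, 0.25],
    [0.05, 0.92, 0.03],
    [0.10, 0.10, 0.80],
]
confident = select_confident(predicted, threshold=0.9)
# confident == [(0, 0), (2, 1)]
```

Samples 1 and 3 are left out because the model is not sure enough about them. A higher threshold gives fewer but cleaner pseudo-labels, and a lower one gives more labels with more noise.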

Clustering and Pseudo-labeling for Data Annotation

Unsupervised grouping techniques help automatically cluster similar objects, reducing the need for extensive manual labeling.

Clustering is a method of grouping similar objects into clusters without labels. It reveals structure in the data that can then be used for annotation. In data annotation, this method:

- Automatically groups similar objects, which reduces the number of unique samples that must be manually labeled. This speeds up the annotation process because annotators label only selected samples instead of labeling all the data.

- Detects anomalies and unusual samples that require separate labeling.

Pseudo-labeling is a method where an AI model predicts labels for unlabeled data, and those predictions are then added to the training set. Pseudo-labeling helps expand the training set and teaches the AI model to generalize from the data.
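The cluster-then-annotate workflow can be sketched in a few lines. The following is a minimal, dependency-free illustration rather than a production pipeline. It assumes 2-D feature vectors, plain k-means with a deterministic farthest-point initialization, and a hypothetical rule standing in for the human annotator. All function names are invented for this example.

```python
import math


def farthest_point_init(points, k):
    """Deterministic seeding: start at the first point, then add the farthest remaining point."""
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(max(points, key=lambda p: min(math.dist(p, c) for c in centroids)))
    return centroids


def kmeans(points, k, iterations=10):
    """Plain k-means. Returns (centroids, cluster index per point)."""
    centroids = farthest_point_init(points, k)
    assignments = [0] * len(points)
    for _ in range(iterations):
        # Assign each point to its nearest centroid.
        assignments = [
            min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points
        ]
        # Move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:
                centroids[c] = tuple(sum(axis) / len(members) for axis in zip(*members))
    return centroids, assignments


def representatives(points, centroids, assignments):
    """Index of the point closest to each centroid: the one sample per cluster to annotate."""
    reps = {}
    for c, centroid in enumerate(centroids):
        members = [i for i, a in enumerate(assignments) if a == c]
        if members:
            reps[c] = min(members, key=lambda i: math.dist(points[i], centroid))
    return reps


def propagate(assignments, reps, human_labels):
    """Copy the label of each annotated representative to its whole cluster."""
    cluster_label = {c: human_labels[i] for c, i in reps.items()}
    return [cluster_label.get(a) for a in assignments]


points = [(0.0, 0.1), (0.2, 0.0), (0.1, 0.3), (5.0, 5.1), (5.2, 4.9), (4.9, 5.3)]
centroids, assignments = kmeans(points, k=2)
reps = representatives(points, centroids, assignments)
# Hypothetical annotator: only the representatives receive a manual label.
human_labels = {i: ("low" if points[i][0] < 2.5 else "high") for i in reps.values()}
pseudo_labels = propagate(assignments, reps, human_labels)
```

Here two manual labels are enough to produce pseudo-labels for all six points. On real data, clusters are rarely this clean, so propagated labels are usually spot-checked or filtered before training.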

Creating and Training Semi-Supervised Models

A ladder network is a neural network that combines a classifier with an autoencoder, so it learns to classify labeled data and reconstruct unlabeled data simultaneously. It works in four steps:

- Encoding. Labeled and unlabeled inputs are passed through a convolutional or fully connected neural network.

- Noising. Noise is added to the encoder's inputs and intermediate activations, so the AI model must stay stable under corruption.

- Decoding. A symmetric decoder network reconstructs the original input data.

- Calculating losses. Three terms (classification, reconstruction, and consistency losses) measure how well the previous stages performed.

Consistency loss method

Consistency loss is a regularization strategy in semi-supervised learning. It encourages the AI model to give stable predictions even when the input data is perturbed. It works as follows:

- Original input. Pass unlabeled data through the AI model.

- Modified input. Perturb the same data, for example, by adding noise, and pass it through the model again.

- Compute the difference. Compare the outputs of the AI model for the two variants of the same sample and penalize the discrepancy.
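The three steps can be written down directly. The sketch below is an assumption-laden illustration: it uses a tiny hypothetical linear softmax classifier, Gaussian noise as the perturbation, and mean squared error between the two prediction vectors as the penalty. Other perturbations and distance measures are equally possible.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict(weights, x):
    """Hypothetical linear classifier: one weight row per class."""
    return softmax([sum(w * xi for w, xi in zip(row, x)) for row in weights])


def consistency_loss(weights, x, noise_std=0.1, rng=None):
    """Mean squared difference between predictions on x and on a noisy copy of x."""
    rng = rng or random.Random(0)
    # Step 1: original input.
    p_clean = predict(weights, x)
    # Step 2: modified input (same sample plus Gaussian noise).
    noisy = [xi + rng.gauss(0.0, noise_std) for xi in x]
    p_noisy = predict(weights, noisy)
    # Step 3: compute the difference between the two outputs.
    return sum((a - b) ** 2 for a, b in zip(p_clean, p_noisy)) / len(p_clean)


weights = [[1.0, -0.5], [-0.3, 0.8]]
sample = [0.6, 0.2]
loss = consistency_loss(weights, sample)
```

No label is needed to compute this loss, which is why it can be applied to unlabeled samples. During training it would be minimized together with the supervised loss.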

Combining clustering and classification losses

Classification losses train the AI model to correctly recognize labeled examples by comparing the predicted classes with the real labels. Thus, they provide accurate training on labeled data.

Clustering losses are applied to unlabeled data to organize the latent space, for example by pulling similar samples together. This makes unlabeled data usable for training.

Combining classification and clustering losses allows you to use labeled and unlabeled data together. In combination, these methods improve the generalization of the AI model and reduce the dependence on a large amount of labeled data.
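A combined objective of this kind is often written as a weighted sum. The sketch below assumes cross-entropy as the classification loss, squared distance to the nearest centroid as the clustering loss, and a hypothetical `weight` of 0.5 between them. The batches and centroids are made-up values, and real systems may use quite different clustering terms.

```python
import math


def cross_entropy(probs, label):
    """Classification loss for one labeled sample."""
    return -math.log(probs[label])


def clustering_loss(embedding, centroids):
    """Squared distance from an embedding to its nearest centroid."""
    return min(
        sum((e - c) ** 2 for e, c in zip(embedding, centroid)) for centroid in centroids
    )


def combined_loss(labeled, unlabeled, centroids, weight=0.5):
    """labeled: list of (class probabilities, true label); unlabeled: list of embeddings."""
    cls = sum(cross_entropy(p, y) for p, y in labeled) / len(labeled)
    clu = sum(clustering_loss(z, centroids) for z in unlabeled) / len(unlabeled)
    return cls + weight * clu, cls, clu


labeled_batch = [([0.8, 0.2], 0), ([0.3, 0.7], 1)]
unlabeled_batch = [[0.1, 0.0], [0.9, 1.2]]
centroids = [[0.0, 0.0], [1.0, 1.0]]
total, cls, clu = combined_loss(labeled_batch, unlabeled_batch, centroids)
```

The `weight` controls how strongly the unlabeled data shapes the latent space. A common design choice is to start with a small weight and increase it as the model's representations become more reliable.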

Deep Learning Approaches to Unsupervised Clustering

Contrastive learning focuses on similarity measures and improves how AI models represent features. This method is used to learn data representations, especially in unsupervised or semi-supervised learning tasks with limited access to labeled data. In contrastive learning, an anchor image is paired with similar (positive) and dissimilar (negative) images. The AI model must learn to distinguish between these pairs, producing representations that are close together for positive pairs and far apart for negative pairs in the latent space.
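One widely used way to express this objective is an InfoNCE-style loss, shown here as an assumed example rather than the specific loss the article has in mind. The sketch uses cosine similarity, a hypothetical temperature of 0.5, and hand-picked 2-D embeddings in place of real encoder outputs.

```python
import math


def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def info_nce(anchor, positive, negatives, temperature=0.5):
    """Low when the anchor is close to the positive and far from the negatives."""
    pos = math.exp(cosine(anchor, positive) / temperature)
    neg = sum(math.exp(cosine(anchor, n) / temperature) for n in negatives)
    return -math.log(pos / (pos + neg))


anchor = [1.0, 0.0]
# Well-organized latent space: the positive lies next to the anchor.
good = info_nce(anchor, positive=[0.9, 0.1], negatives=[[-1.0, 0.2], [0.0, -1.0]])
# Poorly organized latent space: the positive lies opposite the anchor.
bad = info_nce(anchor, positive=[-1.0, 0.2], negatives=[[0.9, 0.1], [0.0, -1.0]])
```

The loss for `good` is much smaller than for `bad`, so minimizing it pushes the encoder toward the arrangement described above.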

Transformer-based models in image recognition

Transformer models capture long-range dependencies, which originally made them well suited to sequential data. In image recognition, an image is split into patches that are processed as a sequence. Key advantages:

- Dependency modeling. Transformers can capture dependencies between different parts of an image, allowing for the recognition of complex patterns and objects.

- Flexibility. The self-attention mechanism makes few assumptions about image content, so the same architecture can be adapted to different tasks, such as classification, segmentation, or detection.

- Scalability. Transformers scale to large datasets and deep architectures, which allows them to achieve strong results on large and complex images.
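The dependency modeling mentioned above comes from self-attention, where every image patch is compared with every other patch. The sketch below is a deliberately simplified illustration: it assumes tiny 2-D patch embeddings and uses identity query, key, and value projections, whereas real transformer layers learn these projections and use multiple heads.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(patches):
    """Scaled dot-product self-attention with identity Q/K/V projections (a simplification)."""
    d = len(patches[0])
    weights = []
    for q in patches:
        # Compare this patch with every patch in the image, including itself.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in patches]
        weights.append(softmax(scores))
    # Each output is a weighted mix of all patch embeddings.
    outputs = [
        [sum(w * v[j] for w, v in zip(row, patches)) for j in range(d)]
        for row in weights
    ]
    return outputs, weights


# Hypothetical embeddings for three image patches; the first two are similar.
patches = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
outputs, weights = self_attention(patches)
```

In `weights`, the first patch attends more strongly to the similar second patch than to the dissimilar third one, regardless of where the patches sit in the image. This is how long-range relationships are captured.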

Future Trends in Clustering and Unsupervised Learning

Future trends focus on integrating deep learning with other machine learning methods to process large amounts of unstructured data such as text, images, and audio. Transformers and pseudo-labeling techniques allow AI models to be adapted without large amounts of labeled data.

The development of cloud computing technologies allows these techniques to be scaled to handle big data. This improves the quality of clustering and machine learning in industries such as financial technology and healthcare, where fast and accurate information processing is required.

FAQ

What is pseudo-labeling, and how does it improve semi-supervised learning?

Pseudo-labeling is a technique that assigns labels generated by a model to unlabeled data. It improves semi-supervised learning by letting the AI model train on that data as well, which makes the model more robust.

How does clustering help improve AI model performance?

Clustering groups similar data points together, which allows the AI model to learn from patterns in those groups.

What are the key differences between semi-supervised and unsupervised learning?

Semi-supervised learning uses labeled and unlabeled data, while unsupervised learning uses only unlabeled data.

How does contrastive learning improve feature similarity?

Contrastive learning teaches AI models to distinguish between similar and dissimilar data points, pulling similar points together and pushing dissimilar ones apart in the latent space.

What is the role of transformer-based models in image recognition?

Transformers in image recognition process data globally and take into account long-range dependencies.

How does the process of clustering and pseudo-labeling work?

The data clustering process identifies patterns, generates pseudo-labels, and improves them through iterative learning.

What future trends can we expect in clustering and unsupervised learning?

Emerging trends include semantic pseudo-labeling, which focuses on semantically meaningful labels, and advanced deep learning architectures that improve representation learning and data usage.
